What is data profiling in ETL?

Data profiling is a critical process in data management, particularly in ETL (Extract, Transform, Load) and data quality management. Profiling helps businesses understand the structure, content, and quality of the data in their systems. In this article, we’ll explore the role of data profiling in ensuring data quality, walk through the main types of profiling, cover best practices, and share examples that illustrate its importance.

What does data profiling achieve?

Data profiling assesses data for quality, consistency, and suitability before it moves through ETL pipelines. In an ETL context, profiling helps data engineers identify anomalies, missing values, duplicates, and outliers early, so they can correct them within the ETL process itself. The primary objectives of data profiling are:

- Assessing Data Quality: Uncover inconsistencies, incomplete data, or duplicate records to improve data quality.

- Data Transformation Guidance: Help determine what transformations (cleansing, standardization) are needed before data is integrated or loaded.

- Understanding Data Structure: Identify the relationships, dependencies, and structures within datasets for better schema design and metadata management.

Types of Data Profiling

1. Column Profiling:

This involves analyzing each column in a dataset to determine basic metrics. For numeric columns, these include minimum, maximum, mean, median, and standard deviation. Column profiling also identifies characteristics such as data type, value distribution, and the presence of null values.

Example: Consider a customer_age column in a customer database. Column profiling might reveal the following:

| Metric | Value |
| --- | --- |
| Min Value | 18 |
| Max Value | 75 |
| Null Count | 12 |
| Data Type | Integer |

Such metrics help identify if customer_age has unexpected nulls or invalid data types.
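A minimal sketch of column profiling in plain Python may help make the metrics concrete. The `profile_column` function and the sample `customer_age` values are hypothetical and chosen for illustration; a real pipeline would more likely rely on a profiling tool or a dataframe library.

```python
from statistics import mean, median

def profile_column(values):
    """Return basic profile metrics for a column; None represents a null."""
    non_null = [v for v in values if v is not None]
    return {
        "min": min(non_null) if non_null else None,
        "max": max(non_null) if non_null else None,
        "mean": mean(non_null) if non_null else None,
        "median": median(non_null) if non_null else None,
        "null_count": len(values) - len(non_null),
        # Distinct Python type names observed among the non-null values
        "types": sorted({type(v).__name__ for v in non_null}),
    }

# Hypothetical sample data (not taken from a real customer table)
customer_age = [18, 42, None, 75, 33, None]
profile = profile_column(customer_age)
print(profile)
```

More than one entry in `types` would suggest mixed data types in the column, and a non-zero `null_count` points to missing values worth investigating.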

2. Data Type Profiling:

Involves checking if the data in each field aligns with the expected data type (e.g., integer, text, date). This is essential in ETL to ensure transformations operate on consistent data types, reducing errors in data manipulation.

Example: In a transaction table, a transaction_date column should contain only valid date values. Data type profiling would flag any free-text strings entered by mistake.
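The check described above can be sketched as follows, assuming the dates arrive as strings in `YYYY-MM-DD` format. The function name, the expected format, and the sample values are assumptions made for this example.

```python
from datetime import datetime

def find_invalid_dates(values, fmt="%Y-%m-%d"):
    """Return (index, value) pairs for entries that do not parse as dates."""
    invalid = []
    for index, value in enumerate(values):
        try:
            datetime.strptime(value, fmt)
        except (ValueError, TypeError):
            # ValueError: wrong format or impossible date; TypeError: not a string
            invalid.append((index, value))
    return invalid

# Hypothetical sample data
transaction_date = ["2024-01-15", "2024-02-30", "yesterday", "2024-03-01", None]
invalid_dates = find_invalid_dates(transaction_date)
print(invalid_dates)
```

Parsing, rather than only checking the declared column type, also catches values that look like dates but cannot exist, such as February 30.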

3. Pattern Profiling:

Analyzes data for patterns within values. This is particularly useful for fields like phone numbers, social security numbers, or email addresses, where values should follow specific formats.

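Example: An email column in an employee dataset could use pattern profiling to confirm that all entries match a regular expression such as [a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-zA-Z]{2,}. This is a simplified format check rather than full email validation; the top-level domain length is left open-ended because many valid domains end in more than six letters. Pattern profiling flags entries that do not match, helping cleanse invalid emails from the dataset.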
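As a rough sketch, pattern profiling for an email column could look like the following. The pattern is a simplified format check in the spirit of the one quoted above, with the top-level domain length left open-ended as an assumption; the function name and sample addresses are hypothetical.

```python
import re

# Simplified email format check; not a full RFC-compliant validator
EMAIL_PATTERN = re.compile(r"[a-zA-Z0-9._%+-]+@[a-zA-Z0-9.-]+\.[a-zA-Z]{2,}")

def split_by_pattern(values, pattern=EMAIL_PATTERN):
    """Split values into those that fully match the pattern and those that do not."""
    matching, failing = [], []
    for value in values:
        if isinstance(value, str) and pattern.fullmatch(value):
            matching.append(value)
        else:
            failing.append(value)
    return matching, failing

# Hypothetical sample data
emails = ["ana@example.com", "bob@example", "carol.example.com", "dev@mail.example.org"]
valid_emails, invalid_emails = split_by_pattern(emails)
print(invalid_emails)
```

Using `fullmatch` instead of `search` matters here: it requires the whole value to follow the format, so an address with stray leading or trailing text is not accepted.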

4. Dependency Profiling:

Examines relationships and dependencies between columns to understand correlations. This helps verify if certain fields are dependent on others, which can be crucial for relational integrity.

Example: In a customer orders dataset, order_total might be expected to equal the sum of the individual product prices for a given order_id. Dependency profiling helps confirm whether this assumption holds.
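One way to test such a dependency is to recompute the total from the line items and compare it with the stored value. The sketch below assumes a hypothetical layout: `orders` maps each order_id to its order_total, and `line_items` is a list of (order_id, price) pairs. The tolerance for floating-point rounding is also an assumption.

```python
from collections import defaultdict

def find_total_mismatches(orders, line_items, tolerance=0.01):
    """Return orders whose stored total differs from the sum of their item prices."""
    sums = defaultdict(float)
    for order_id, price in line_items:
        sums[order_id] += price

    mismatches = {}
    for order_id, order_total in orders.items():
        computed = sums.get(order_id, 0.0)
        if abs(computed - order_total) > tolerance:
            # Record (stored total, recomputed total) for review
            mismatches[order_id] = (order_total, round(computed, 2))
    return mismatches

# Hypothetical sample data
orders = {1001: 59.98, 1002: 20.00}
line_items = [(1001, 29.99), (1001, 29.99), (1002, 12.50), (1002, 5.00)]
mismatches = find_total_mismatches(orders, line_items)
print(mismatches)
```

A mismatch does not necessarily mean the data is wrong. It may reveal an undocumented rule, such as tax, shipping, or discounts being included in order_total.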

5. Uniqueness and Duplicate Profiling:

Focuses on identifying duplicate or unique values within a dataset. This is essential in ETL workflows to ensure accurate, duplicate-free records in data warehouses.

Example: A customer_id column in the customers table should ideally contain unique values to ensure customer data integrity.
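A uniqueness check can be sketched with a simple frequency count. The function name and the sample IDs below are hypothetical.

```python
from collections import Counter

def find_duplicates(values):
    """Return each value that appears more than once, with its occurrence count."""
    counts = Counter(values)
    return {value: count for value, count in counts.items() if count > 1}

# Hypothetical sample data
customer_id = ["C001", "C002", "C003", "C002", "C004", "C001", "C002"]
duplicates = find_duplicates(customer_id)

# Share of distinct values; 1.0 would mean the column is fully unique
uniqueness_ratio = len(set(customer_id)) / len(customer_id)
print(duplicates, uniqueness_ratio)
```

A uniqueness ratio below 1.0 on a column that is meant to be a key is a signal to deduplicate before loading.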

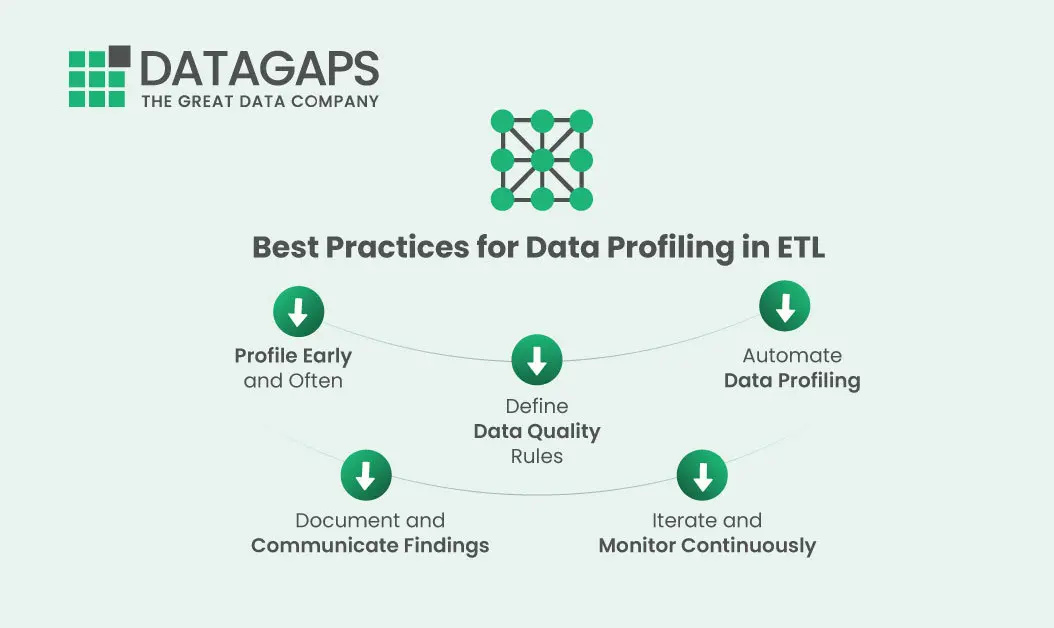

Top 5 Best Practices for Data Profiling in ETL

1. Profile Early and Often

Integrate profiling at multiple stages in the ETL process to identify and correct quality issues at the source, during transformation, and before loading. Profiling early minimizes downstream errors.

2. Define Data Quality Rules

Establish rules that define what constitutes quality data, such as acceptable ranges for numerical data, mandatory field presence, and consistent data types. These rules should guide your profiling and help standardize data across sources.
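Quality rules of this kind can be expressed as small, named checks that profiling runs against each record. The sketch below is illustrative only: the field names, the age range of 18 to 120, and the `validate_record` helper are assumptions, not rules prescribed by any particular tool.

```python
# Each rule maps a field name to a check that returns True for acceptable values
RULES = {
    # Mandatory field: must be a non-empty string
    "customer_id": lambda v: isinstance(v, str) and v != "",
    # Consistent type and acceptable range (assumed bounds)
    "customer_age": lambda v: isinstance(v, int) and 18 <= v <= 120,
}

def validate_record(record, rules=RULES):
    """Return the names of the fields in a record that violate their rule."""
    return [field for field, check in rules.items() if not check(record.get(field))]

# Hypothetical sample data
records = [
    {"customer_id": "C001", "customer_age": 34},
    {"customer_id": "", "customer_age": 29},
    {"customer_id": "C003", "customer_age": "forty"},
    {"customer_id": "C004"},
]

violations = {}
for index, record in enumerate(records):
    failed = validate_record(record)
    if failed:
        violations[index] = failed
print(violations)
```

Keeping the rules in one place makes it easier to apply the same definitions across sources and to report violations per rule.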

3. Automate Data Profiling

Automation tools can make profiling more efficient and repeatable. Tools like Talend, Informatica, and Apache Griffin have built-in profiling features. Automation reduces manual effort and ensures profiling occurs consistently.

4. Document and Communicate Findings

Profiling generates valuable insights that should be shared with all data stakeholders. Documenting profiling results can inform downstream teams about data health, enhancing data governance.

5. Iterate and Monitor Continuously

As data evolves, continuous profiling and monitoring are essential to maintain data quality. Scheduling regular profiling checks enables proactive detection and resolution of emerging issues.
