In a world where data drives every decision, the biggest threats often come from what we don’t see. Most data teams are fighting yesterday’s war. While they chase missing values and duplicates, the real destroyers are already inside their systems, invisible and multiplying. Which is why organizations must invest in monitoring unknown data issues to safeguard their systems from silent failures and costly disruptions.

Data Quality (DQ) issue management teams build validation frameworks to tackle known problems—missing values, duplicates, or format mismatches. But, the severe disruptions happen from unknowns like the schema changes no one anticipated, the column length tweaks that quietly break downstream systems, or the unexpected nulls that derail test cases? These are the data disasters waiting to happen.

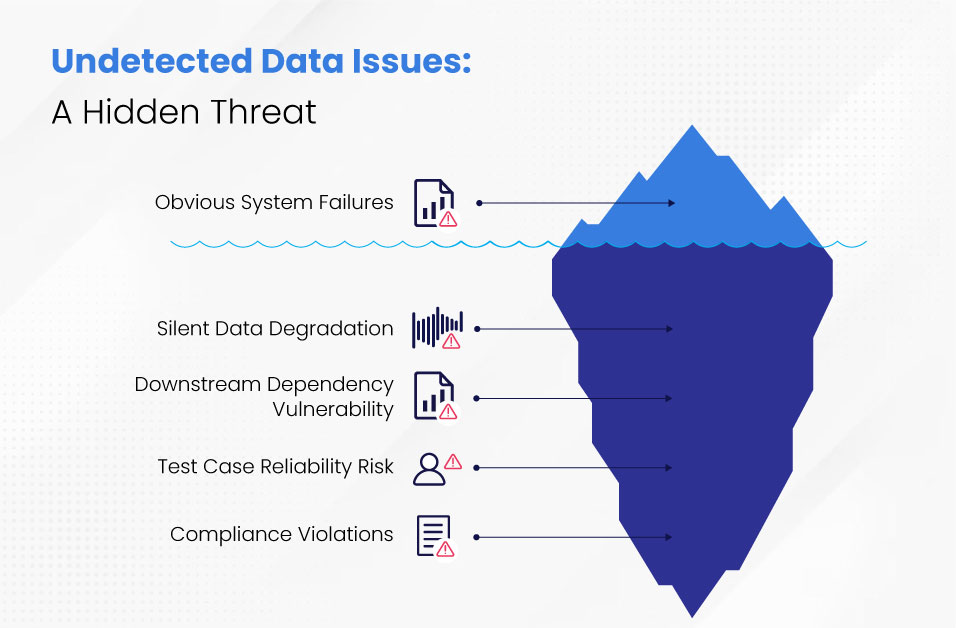

What Happens When Unknown Data Issues Go Undetected

1. Silent Failures Are the Most Dangerous

Unlike obvious system failures, these degradation patterns erode trust one decision at a time, compounding damage across every downstream process that relies on compromised data.

2. Downstream Dependencies Are Vulnerable

Modern data ecosystems are deeply interconnected. A single schema change in one source can propagate through rest of the systems breaking ETL pipelines, corrupting dashboards, and derailing machine learning models.

3. Test Case Reliability Is at Risk

“How to prevent test case failures due to schema drift?” The answer lies in early detection. If a column is modified and this change isn’t flagged, entire test suites can fail, delaying releases and increasing costs.

Organizations spend 40% of their development cycles on data-related rework because they detect structural changes after damage occurs, not before it spreads.

4. Compliance Is Non-Negotiable

In regulated industries like banking and healthcare, data integrity isn’t optional. Unknown issues can lead to non-compliance, audit failures, regulatory penalties and reputational damage that can end careers and close divisions

What Are Unknown Data Issues?

Unknown data issues are anomalies that occur without immediate detection. Unlike traditional data quality problems, they’re not flagged by standard validation rules and often go unnoticed until they cause real damage. These can include:

- Schema drift (e.g., column renaming, type changes)

- Unexpected data distribution shifts

- Format inconsistencies

- Silent truncation due to column length mismatches

Unpacking unknown issues through schema drift

While unknown data issues include distribution shifts, format inconsistencies, and silent truncation, schema drift represents the most common and impactful category affecting 70% of data pipeline failures. The monitoring approach we’ll outline applies universally, but we’ll use schema drift as our primary example since it illustrates the broader detection challenge facing modern data teams.

Let us consider 2 examples

Example 1: Column Length Expansion

A source system increases email field length from 50 to 100 characters. Your data warehouse still expects 50 characters, causing silent truncation that corrupts customer records without generating alerts.

Example 2: Field Renaming

The field name 'email' changes to 'user_email', breaking transformations across multiple systems

Original Data (Day 1)

json

{

“customer_id”: “C123”,

“name”: “Ravi Kumar”,

“email“: “ravi.kumar@example.com”,

“signup_date”: “2025-01-10”

}

Drifted Data (Day 45)

json

{

“customer_id”: “C123”,

“name”: “Ravi Kumar”,

“user_email“: “ravi.kumar@example.com”,

“signup_date”: “2025-01-10”

}

What Goes Wrong

- Your ETL pipeline is configured to extract the email field. Since it no longer exists, the pipeline either:

- Skips the record entirely

- Inserts a null value for email

- Fails silently, depending on error handling

- Downstream systems like CRM or marketing tools that rely on email for communication or segmentation now receive incomplete customer profiles.

- Dashboards showing customer engagement metrics display blanks or drop users from email-based filters.

- Compliance systems tracking consent miss critical records when fields disappear, risking regulatory violations.

- Cross-team collaboration breaks down as data engineers spend 40% more time troubleshooting pipeline failures while business analysts lose trust in reports when metrics suddenly drop without explanation, creating a cycle of emergency audits and manual reconciliation work that consumes both teams’ strategic capacity.

Metrics to Measure Schema Drift Impact

1. Drift Frequency

Definition: How often schema changes occur in the source systems.

Metric: Number of schema changes per month or per data source.

2. Drift Detection Latency

Definition: Time taken to detect schema drift after it occurs.

Metric: Average time (in hours or days) between drift occurrence and detection.

3. Pipeline Failure Rate

Definition: TPercentage of ETL jobs or data pipelines that fail due to schema drift.

Metric: (Failed jobs due to drift / Total jobs) × 100

4. Data Loss Rate

Definition: Volume or percentage of data lost or corrupted due to schema mismatches.

Metric: (Lost or malformed records / Total records processed) × 100

5. Test Case Failure Rate

Definition: Number of test cases that fail due to schema inconsistencies.

Metric: (Drift-related test failures / Total test cases) × 100

6. Business Impact Score

Definition: Weighted score based on affected KPIs (e.g., revenue, customer experience, compliance).

Metric: Custom scale (1–10) based on severity and scope of impact.

7. Schema Compatibility Score

Definition: Degree to which the solution supports backward and forward compatibility.

Metric: Score based on schema registry validations or compatibility checks.

The Solution: Proactive Schema Detection Through Data Observability

Two core solution components address the detection gaps:

1. Monitoring via Data Observability dashboards

2. Maintaining schema registry

Section: Implementing Data Observability as your Solution

When a column changes in source files, a robust observability platform would:

- Detect the schema drift instantly

- Log it in the Data Quality catalog

- Alert stakeholders

- Map all downstream dependencies

- Pause or reroute test execution to prevent failures

This proactive approach transforms unknowns into manageable knowns giving teams the visibility they need to act before damage occurs.

Solution Impact: Before vs. After Implementation -Schema Drift

- A column is renamed in the source system

- ETL jobs fail silently or produce incorrect results

- Dashboards show blank fields and misleading metrics

- Test cases fail unexpectedly, delaying releases

- The change is detected and logged within minutes

- Impact analysis identifies affected systems

- Teams implement fixes before production deployment

- Downstream systems receive clean, consistent data

A Strategic Framework for Proactive Data Issue Management

1. Adopt Data Observability Tools

Implement platforms that offer real-time monitoring, schema drift detection, and anomaly alerts. These tools act as your early warning system.

2. Integrate detection with Test Automation

Connect test automation frameworks directly to data quality catalogs. If a schema change is detected, test cases should be flagged or paused automatically.

3. Schema Diff Automation

- Run automated schema difference checks between environments (e.g., dev vs prod) before test execution.

- Flag and isolate tests that depend on changed fields.

4. Maintain a Centralized DQ Catalog

Track all known and unknown issues, schema changes, and resolution history in one place. This becomes your single source for data reliability.

5. Conduct Impact Analysis

When changes are detected, assess which systems, reports, or models are affected. This helps with prioritizing fixes and avoiding surprises.

6. Establish Governance Protocols

Define clear workflows for handling schema changes, including approvals, rollback mechanisms, and communication plans.

Final Thoughts – The Path Forward

Monitoring unknown data issues isn’t just a technical best practice. It is a strategic imperative. In a data-driven world, the cost of ignoring silent anomalies can be catastrophic. Just like insurance protects us from the unexpected, data observability protects our systems from silent data failures.

The choice is clear: implement proactive data observability frameworks with robust detection capabilities now, or continue discovering failures through customer complaints and broken dashboards

Talk to a Datagaps Expert

Discover how data observability helps identify hidden data issues like schema drift, prevents silent failures, and ensures trusted, reliable data across your pipelines.